pdoc: Auto-generated Python Documentation

This short post looks at pdoc, a lightweight documentation generator for Python programs, provides an example used on the logline docs page, and explores some advantages of automatic docgen for the pragmatic programmer.

Radical simplicity

pdoc is a super simple tool for auto-generating Python program documentation.

Python package

pdoc provides types, functions, and a command-line interface for accessing public documentation of Python modules, and for presenting it in a user-friendly, industry-standard open format. It is best suited for small- to medium-sized projects with tidy, hierarchical APIs.

It's a pip package, so a simple command-line installation will have you up and running in seconds. pdoc is a lightweight alternative to Sphinx with minimal cognitive overhead. If you love Markdown you'll probably love pdoc. And if you don't love it, you'll like it.

Less is more

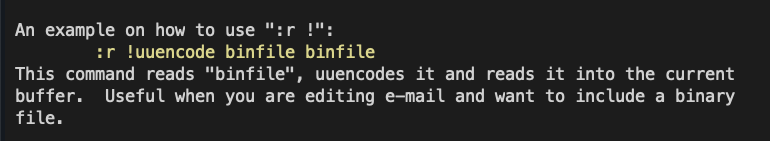

You can output your documentation in PDF or HTML format with a simple flag:

    pdoc --PDF filename
    pdoc --HTML filename

The PDF option is a personal favorite because it produces a nice LaTeX document that would make Donald Knuth smile. You can even push it to the arXiv and pretend you're a physicist.

Autogenerated documentation is not exactly the Platonic ideal of literate programming, but if you have well-docstringed modules, functions, and classes, pdoc produces a user-friendly breakdown of your code into clean, modular sub-headings.
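To make "well-docstringed" concrete, here is a minimal sketch of a module that gives a docstring-driven generator something to work with. It is not taken from the AKQ Game source; the names `Card` and `showdown` are hypothetical, chosen only to illustrate a module docstring, a class docstring, and a function docstring with argument and return sections.

```python
"""Toy three-card poker helpers (hypothetical example module)."""

from enum import IntEnum


class Card(IntEnum):
    """The three cards in the deck, ordered from weakest to strongest."""

    QUEEN = 1
    KING = 2
    ACE = 3


def showdown(hero: Card, villain: Card) -> str:
    """Return which player wins at showdown.

    Args:
        hero: The card held by the first player.
        villain: The card held by the second player.

    Returns:
        ``"hero"`` or ``"villain"``, whichever holds the higher card.

    Raises:
        ValueError: If both players hold the same card, which cannot
            happen when dealing from a three-card deck without replacement.
    """
    if hero == villain:
        raise ValueError("both players cannot hold the same card")
    return "hero" if hero > villain else "villain"
```

The one-line summary at the top of each docstring is the part that matters most for a quick read of the generated page; the longer sections are there for anyone who clicks through.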

The AKQ Game

The Ace-King-Queen Game is a toy model poker game written in Python. You can check out the GitHub repo here. It's a command-line game that contains the essence of hand ranges, bluffing frequencies, and calling frequencies, in a cartoonishly simple three-card game for two players. This implementation pits the human player against an "artificially intelligent" computer player.

I used pdoc to auto-generate the HTML documentation for the game. A few tweaks and it was fit to publish on the logline website.

The Overview Effect

Per Wikipedia:

The overview effect is a cognitive shift in awareness reported by some astronauts during spaceflight, often while viewing the Earth from outer space.

On a more mundane plane, viewing your code from a high level can be clarifying: you inspect the atomic elements of a script, neatly arranged and indexed. The beauty of pdoc is how lightweight and fast it makes the docgen process. You can use it as part of an iterative development workflow, taking a periodic bird's-eye view of your code to survey the big picture.

Function-wise inspection of a program makes a lot of sense. If all the function names and docstrings pass the smell test after a quick run through pdoc, you're probably on the right path. Otherwise, it can help weed out faulty logic or potential missteps before they sabotage your script.
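The same smell test can be roughed out with the standard library alone. The sketch below does not use pdoc or reproduce how it works internally; it uses Python's `inspect` module to list each public function and class defined in a module next to the first line of its docstring, and flags anything undocumented. The helper name `summarize_public_api` and the `<missing docstring>` marker are my own choices for illustration.

```python
import inspect
import types

MISSING = "<missing docstring>"


def summarize_public_api(module: types.ModuleType) -> list[tuple[str, str]]:
    """Return (name, first docstring line) pairs for a module's public API.

    Only functions and classes defined in the module itself are listed;
    names starting with an underscore and imported objects are skipped.
    """
    rows = []
    # inspect.getmembers returns (name, object) pairs sorted by name.
    for name, obj in inspect.getmembers(module):
        if name.startswith("_"):
            continue
        if not (inspect.isfunction(obj) or inspect.isclass(obj)):
            continue
        if getattr(obj, "__module__", None) != module.__name__:
            continue  # imported from elsewhere, not part of this module
        doc = inspect.getdoc(obj) or ""
        lines = doc.splitlines()
        rows.append((name, lines[0] if lines else MISSING))
    return rows


if __name__ == "__main__":
    import json  # stand-in target; swap in your own module

    for name, summary in summarize_public_api(json):
        print(f"{name:20} {summary}")
```

If the printed summaries read like a sensible table of contents, the generated docs probably will too; a column of missing-docstring markers is a hint to write the docstrings before running the generator.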